Abstract

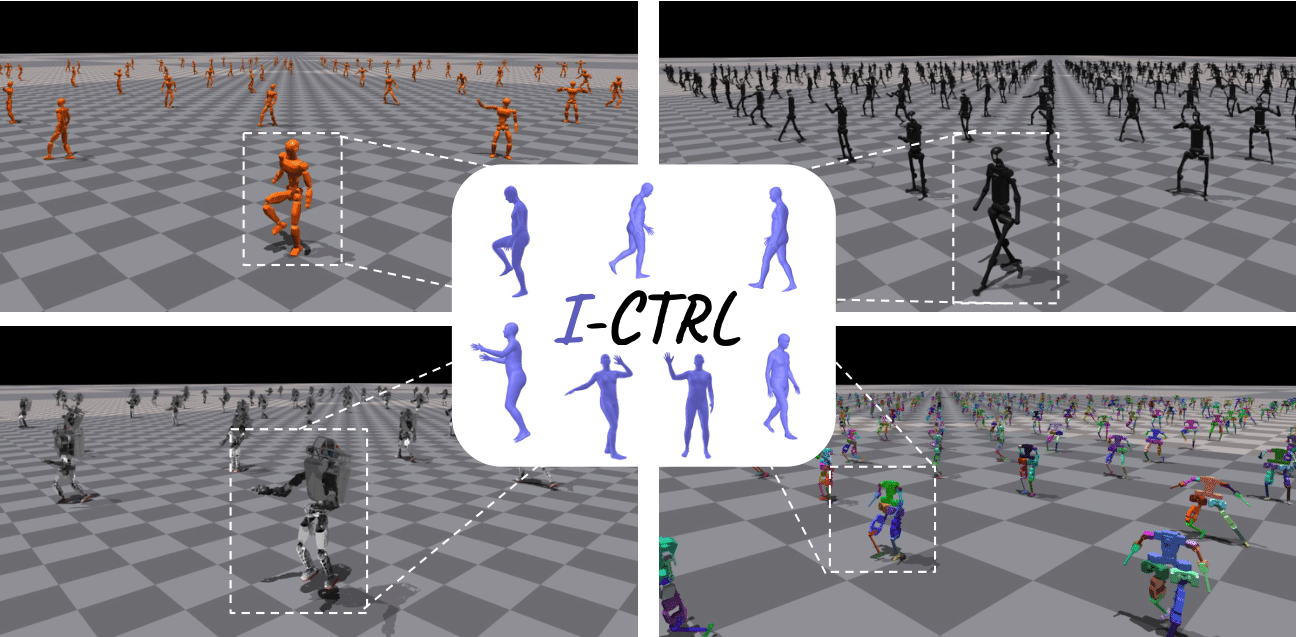

Humanoid robots have the potential to mimic human motions with high visual fidelity, yet translating these motions into practical, physical execution remains a significant challenge. Existing techniques in the graphics community often prioritize visual fidelity over physics-based feasibility, which hinders the deployment of bipedal systems in practical applications. This paper addresses these issues by introducing a constrained reinforcement learning algorithm that produces physics-based, high-quality motion imitation on legged humanoid robots, enhancing motion resemblance while successfully following the reference human trajectory. Our framework, Imitation to Control Humanoid Robots Through Constrained Reinforcement Learning (I-CTRL), reformulates motion imitation as a constrained refinement over non-physics-based retargeted motions. I-CTRL excels in motion imitation with simple and unique rewards that generalize across four robots. Moreover, our framework can follow large-scale motion datasets with a single RL agent. The proposed approach is a crucial step forward in the control of bipedal robots, emphasizing the importance of aligning visual and physical realism for successful motion imitation.
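For readers unfamiliar with the term, constrained reinforcement learning is commonly written as a constrained Markov decision process. The block below is the generic textbook form, not necessarily the exact objective used by I-CTRL; the symbols (reward $r$, cost functions $c_i$, thresholds $d_i$, discount $\gamma$) are assumptions introduced here for illustration.

```latex
\begin{aligned}
\max_{\pi}\quad & \mathbb{E}_{\tau \sim \pi}\!\left[\sum_{t=0}^{T} \gamma^{t}\, r(s_t, a_t)\right] \\
\text{s.t.}\quad & \mathbb{E}_{\tau \sim \pi}\!\left[\sum_{t=0}^{T} \gamma^{t}\, c_i(s_t, a_t)\right] \le d_i,
\qquad i = 1, \dots, m
\end{aligned}
```

In a motion-imitation setting, one plausible reading is that the reward measures resemblance to the retargeted reference while the costs encode physical requirements, but the page does not specify how these terms are defined.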

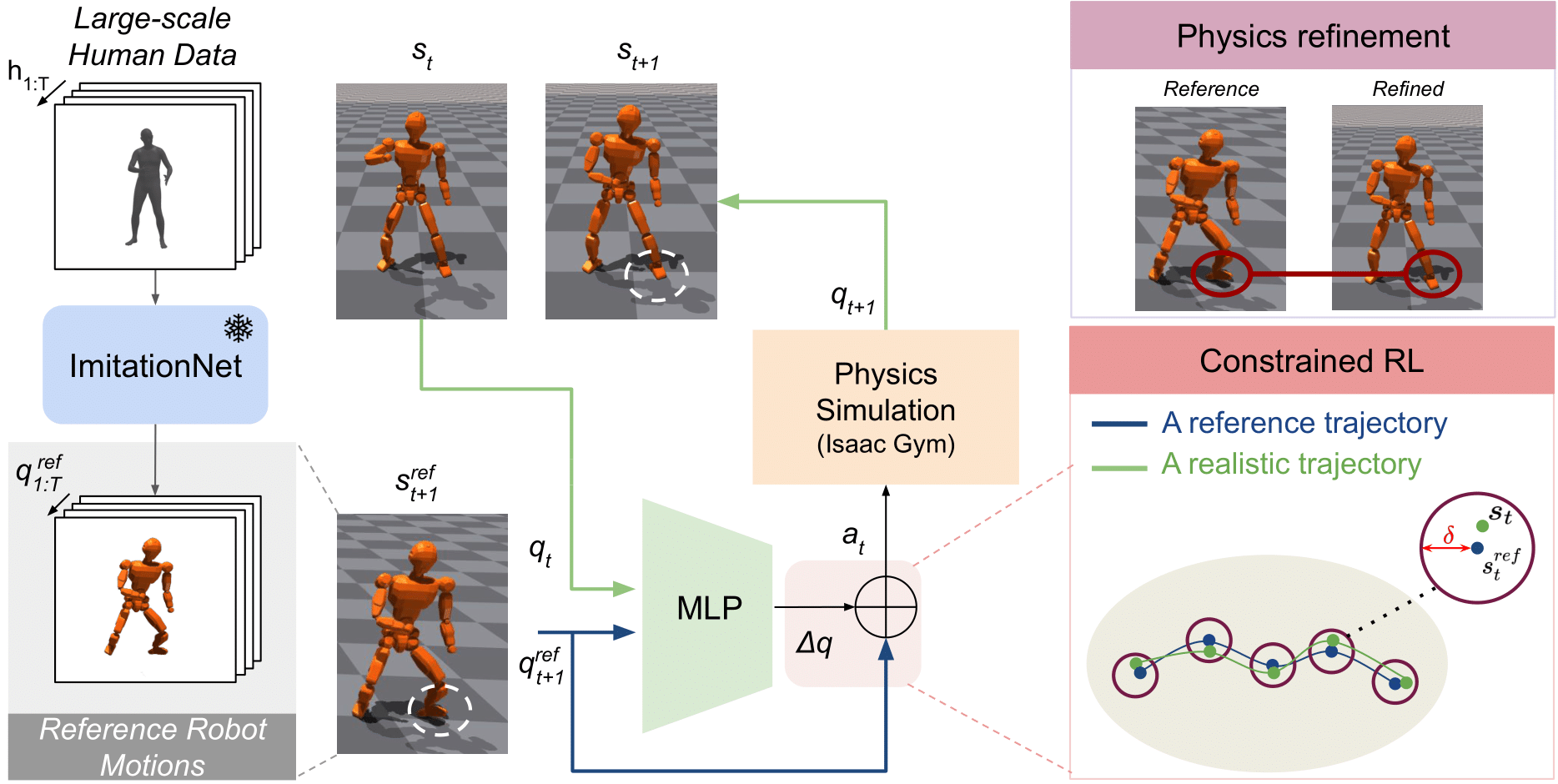

How does it work?

First, we train ImitationNet to retarget human motions onto different robots. ImitationNet provides stylistic robot poses that resemble the original human motion but are not feasible to execute in the physical world. Then, we train our whole-body controller using constrained reinforcement learning to refine those reference poses based on the current observation from the physics simulator IsaacGym.
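The refinement idea described above can be sketched, very roughly, as a policy that proposes a bounded correction around each retargeted reference pose. This is a minimal illustration under stated assumptions, not the I-CTRL controller: the residual formulation, the `max_residual` bound, the exponential reward shape, and the function names `refine_pose` and `imitation_reward` are all hypothetical, and no simulator is involved.

```python
import numpy as np


def refine_pose(reference_pose, residual, joint_lower, joint_upper, max_residual=0.2):
    """Return a joint target close to the reference and inside joint limits.

    Hypothetical sketch: the policy output is treated as a residual that may
    deviate from the retargeted reference by at most `max_residual` radians
    per joint (an assumed bound, not taken from the paper).
    """
    bounded = np.clip(residual, -max_residual, max_residual)
    return np.clip(reference_pose + bounded, joint_lower, joint_upper)


def imitation_reward(robot_pose, reference_pose, scale=2.0):
    """Assumed resemblance reward: 1.0 for a perfect match, decaying with error."""
    error = np.sum((np.asarray(robot_pose) - np.asarray(reference_pose)) ** 2)
    return float(np.exp(-scale * error))


if __name__ == "__main__":
    reference = np.array([0.0, 1.0])   # retargeted (non-physical) joint angles
    residual = np.array([0.5, -0.05])  # stand-in for a policy output
    target = refine_pose(reference, residual, joint_lower=-1.0, joint_upper=1.0)
    print(target, imitation_reward(target, reference))
```

In a real pipeline the residual would come from a trained policy conditioned on simulator observations, and the resulting target would be passed to the robot's low-level joint controller.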

Human Motion Retargeting into Bipedal Robots

Performance of our RL agent in translating a dynamic human dance motion onto four different humanoid robots. The video imitates “a person doing a miscellaneous dance” on the BRUCE, Unitree-H1, JVRC-1, and ATLAS robots.

“ATLAS Robot”

“H1 Robot”

“JVRC Robot”

“BRUCE Robot.”

Comparison with the baselines

Comparison of the performance of DeepMimic and AMP versus our approach. Here, the reference is the robot motion retargeted with ImitationNet from a real human walking. The reference robot (green) ignores the laws of physics for visualization purposes.

Other Motions

Here we showcase how our generalized model (trained on 10K different motions) can imitate diverse motion commands.

“BRUCE Robot is walking forward at a fast pace”

“H1 is playing basketball”

“BRUCE Robot is boxing”

“JVRC Robot is fighting”

“H1 robot is throwing punches in the air.”

“G1 robot is running counter-clockwise.”

“G1 robot is punching the air.”

BibTeX

@article{2024ictrl,

title={I-CTRL: Imitation to Control Humanoid Robots Through Constrained Reinforcement Learning},

author={Yan, Yashuai and Mascaro, Esteve Valls and Egle, Tobias and Lee, Dongheui},

journal={arXiv preprint arXiv:2405.08726},

year={2024}

}