Abstract

Integrating robots into populated environments is a complex challenge that requires an understanding of human social dynamics. In this work, we propose to model social motion forecasting in a shared human-robot representation space, which allows us to synthesize robot motions that interact with humans in social scenarios even though no robot is observed during training. We develop a transformer-based architecture called ECHO, which operates in this shared space to predict the future motions of the agents encountered in social scenarios. Unlike prior work, we reformulate the social motion problem as the refinement of predicted individual motions based on the surrounding agents, which eases training while still allowing single-motion forecasting when only one human is in the scene. We evaluate our model on multi-person and human-robot motion forecasting tasks and obtain state-of-the-art performance by a large margin while remaining efficient and running in real time. Additionally, our qualitative results showcase the effectiveness of our approach in generating human-robot interaction behaviors that can be controlled via text commands.

How does it work?

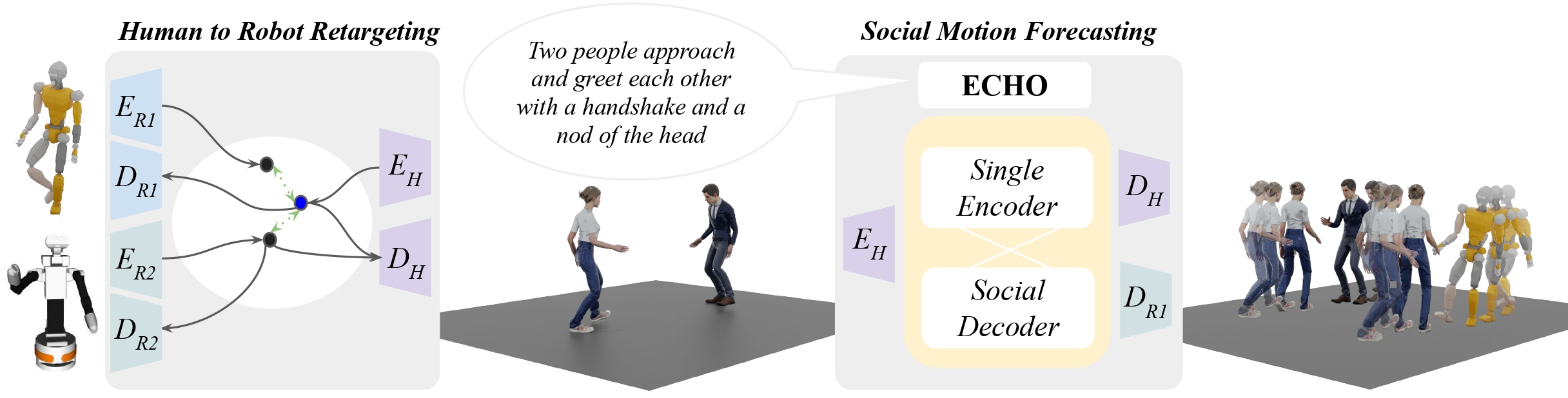

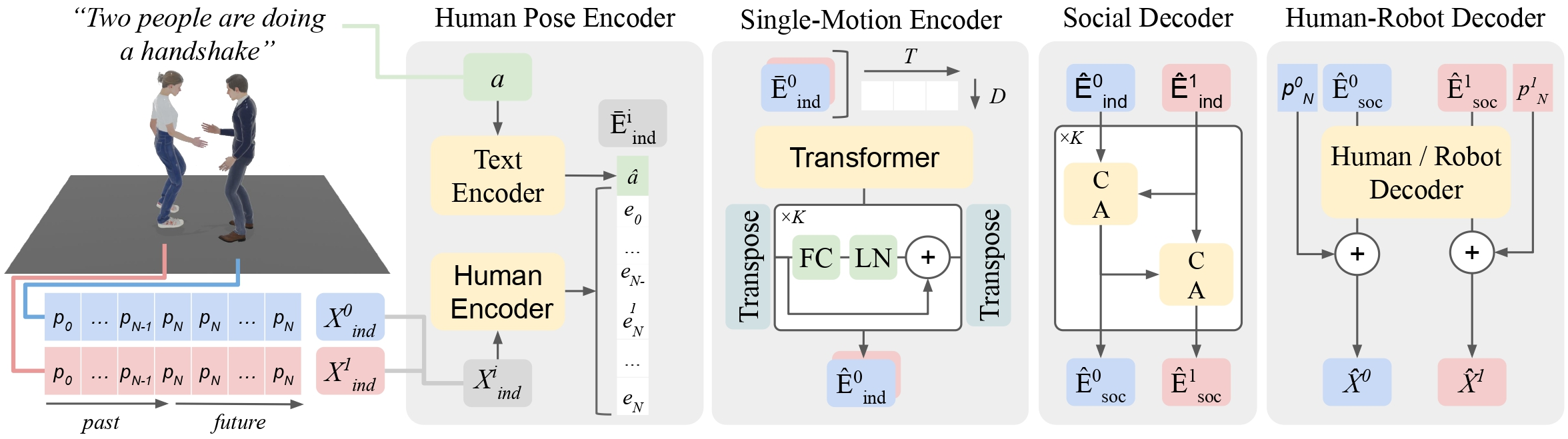

Overview of our ECHO framework. First, we learn how to encode (E) and decode (D) the JVRC-1 robot [1] (in the top left, R1) and the TIAGo++ robot (in the bottom left, R2) to a latent representation shared with a human (H) while preserving its semantics. Then, we take advantage of this shared space in the social motion forecasting task. Our Single Encoder learns the dynamics of single agents given a textual intention and its past observations. Later, we iteratively refine those motions based on the social context of the surrounding agents using the Social Decoder. Our overall framework can decode the robot’s motion in a social environment, closing the gap for natural and accurate Human-Robot Interaction.
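The two-stage idea in the overview (forecast each agent on its own, then iteratively refine using the surrounding agents) can be illustrated with a toy sketch. Everything below is an assumption made for illustration: `forecast_single` uses constant-velocity extrapolation as a stand-in for the learned Single Encoder, `refine_social` uses a simple distance-based nudge as a stand-in for the learned Social Decoder, and the 2D points, function names, and parameters (`min_dist`, `step`, `iterations`) are hypothetical. It is not the ECHO architecture, and it omits the text conditioning entirely.

```python
from typing import List, Tuple

Point = Tuple[float, float]
Trajectory = List[Point]


def forecast_single(past: Trajectory, horizon: int) -> Trajectory:
    """Stage 1 stand-in (assumption): constant-velocity extrapolation of one agent."""
    (x0, y0), (x1, y1) = past[-2], past[-1]
    vx, vy = x1 - x0, y1 - y0
    return [(x1 + vx * t, y1 + vy * t) for t in range(1, horizon + 1)]


def refine_social(futures: List[Trajectory], min_dist: float, step: float) -> List[Trajectory]:
    """Stage 2 stand-in (assumption): one refinement pass over all agents.

    Each agent's predicted position is nudged away from any other agent
    predicted to be closer than min_dist at the same time step.
    """
    refined = []
    for i, traj in enumerate(futures):
        new_traj = []
        for t, (x, y) in enumerate(traj):
            dx_total, dy_total = 0.0, 0.0
            for j, other in enumerate(futures):
                if i == j:
                    continue
                ox, oy = other[t]
                dx, dy = x - ox, y - oy
                dist = (dx * dx + dy * dy) ** 0.5
                if 0.0 < dist < min_dist:
                    push = step * (min_dist - dist) / dist
                    dx_total += dx * push
                    dy_total += dy * push
            new_traj.append((x + dx_total, y + dy_total))
        refined.append(new_traj)
    return refined


def forecast_scene(
    pasts: List[Trajectory],
    horizon: int,
    iterations: int = 3,
    min_dist: float = 0.5,
    step: float = 0.5,
) -> List[Trajectory]:
    """Forecast every agent individually, then refine with social context."""
    futures = [forecast_single(p, horizon) for p in pasts]
    if len(futures) == 1:
        # With a single agent there is no social context to refine against,
        # so the individual forecast is returned unchanged.
        return futures
    for _ in range(iterations):
        futures = refine_social(futures, min_dist, step)
    return futures
```

Calling `forecast_scene` with one past trajectory returns the individual forecast as is, while calling it with two or more trajectories applies the refinement passes. This mirrors the structural point made above: the social step is a correction on top of individual predictions, so the single-agent case needs no separate model.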

Social Human Motion Forecasting

We test our model on the InterGen dataset for dyadic motion forecasting in social settings, where it outperforms, on average, all state-of-the-art models in multi-person motion forecasting. InterGen is the largest dataset of social motions and contains both daily (e.g., handover, greeting, communication) and more professional (e.g., dancing, boxing) human-human interactions with text descriptions.

“One person seizes the other's right hand utilizing his left hand. The other revolves once to the left, at the same time waves her left hand.”

“These two stroll closely while performing alternating arm swings in sync, then proceed to complete a half-circle around the field.”

“The first person approaches the other and pushes them with their left hand.”

“These two move in a rhythmic manner, then one lifts a leg to attack the other, and the other person jumps upwards and responds with a swift move.”

“The two individuals approach each other, engage in a tai chi combat, and then retreat from each other simultaneously.”

“The first person takes a step back with the right foot while the second takes a step forward with the left foot.”

“One of the persons takes steps together with the other person, their feet are tied halfway.”

“Two people stand side by side, making a heart shape with their hands.”

“One person leads the other in a dance by pulling the latter's right hand with the former's left hand. One person then circles around the head of the other to stand behind them. (part1)”

“One person leads the other in a dance by pulling the latter's right hand with the former's left hand. One person then circles around the head of the other to stand behind them. (part2)”

“One person excitedly drags the other person sitting in the chair to go play, while the other person reluctantly follows along.”

“Two individuals stand facing each other, take a step forward, and shake each other's right hand.”

“One tosses a stuffed Disney character over to the other.”

“The second person takes a small step forward towards the second with the left leg, lifts the left foot and puts the right leg forward and then puts it down.”

“One lets go of their right hand, the other lets go of their left hand, and they both swing their arms in circular motions.”

Social motion forecasting for Human-Robot Interaction

We use ImitationNet to translate human and robot poses into a shared latent space, and then learn through our ECHO model how to generate social interactions from human data. The results show that our method is able to generate accurate robot motions that resemble human behavior without ever accessing annotated robot data. Here, the human-human pair represents the ground truth, while the human-robot pair represents the forecasted human-robot interaction. The following videos show the results on the TIAGo++ and the JVRC-1 humanoid robots.

“The first person approaches the other and pushes them with their left hand.”

“Two individuals raise their hands, touch their left hands, and make a small half circle counterclockwise.”

“One person excitedly drags the other person sitting in the chair to go play, while the other person reluctantly follows along.”

“The first person takes a step back with the right foot while the second takes a step forward with the left foot.”

“One person excitedly drags the other person sitting in the chair to go play, while the other person reluctantly follows along.”

“These two stroll closely while performing alternating arm swings in sync, then proceed to complete a half-circle around the field.”

“Two individuals raise their hands, touch their left hands, and make a small half circle counterclockwise.”

“One of the persons takes steps together with the other person, their feet are tied halfway.”
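The shared-latent-space idea used in this section (encode a human pose into an embodiment-independent representation, then decode it with a robot-specific decoder) can be illustrated with a small hand-crafted sketch. This is an assumption-laden stand-in, not ImitationNet: the real encoders and decoders are learned, whereas here the "latent" is simply the unit direction of each limb, and the function names, joint layout, and link lengths are hypothetical.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def _unit(a: Vec3, b: Vec3) -> Vec3:
    """Unit vector pointing from joint a to joint b."""
    d = (b[0] - a[0], b[1] - a[1], b[2] - a[2])
    n = math.sqrt(sum(c * c for c in d))
    return (d[0] / n, d[1] / n, d[2] / n)


def encode_chain(joints: List[Vec3]) -> List[Vec3]:
    """Toy encoder (assumption): a kinematic chain becomes a list of limb directions.

    The result does not depend on limb lengths, so a human chain and a robot
    chain in the same posture map to the same latent.
    """
    return [_unit(joints[k], joints[k + 1]) for k in range(len(joints) - 1)]


def decode_chain(latent: List[Vec3], base: Vec3, link_lengths: List[float]) -> List[Vec3]:
    """Toy decoder (assumption): rebuild joint positions for a given embodiment."""
    joints = [base]
    for direction, length in zip(latent, link_lengths):
        p = joints[-1]
        joints.append(
            (p[0] + direction[0] * length,
             p[1] + direction[1] * length,
             p[2] + direction[2] * length)
        )
    return joints


# Hypothetical human arm: shoulder, elbow, wrist (metres).
human_arm = [(0.0, 0.0, 0.0), (0.3, 0.0, 0.0), (0.3, 0.0, 0.25)]
latent = encode_chain(human_arm)

# Hypothetical robot arm with different link lengths and base position.
robot_arm = decode_chain(latent, base=(0.0, 0.0, 1.0), link_lengths=[0.2, 0.2])
```

In this toy setting, re-encoding `robot_arm` gives back the same latent as the human arm, which is the property that lets a forecaster trained only on human data produce motions that a robot decoder can consume.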

BibTeX

@article{valls2024echo,

title={Robot Interaction Behavior Generation based on Social Motion Forecasting for Human-Robot Interaction},

author={Valls Mascaro, Esteve and Yan, Yashuai and Lee, Dongheui},

journal={2024 IEEE International Conference on Robotics and Automation (ICRA)},

year={2024}

}